Perturbation Theory in Machine Learning

2024 Feb 18

In quantum mechanics there is this idea of perturbation theory, where a Hamiltonian H is perturbed by some change to become H + ΔH. As long as the perturbation ΔH is small, we can use the technique of perturbation theory to find out facts about the perturbed Hamiltonian, like what its eigenvalues should be.

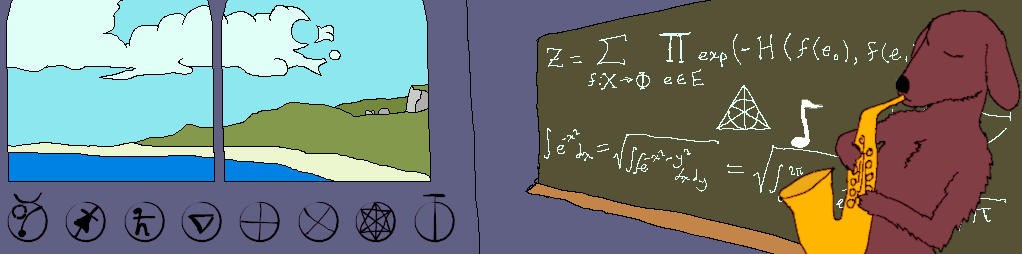

An interesting question is whether we can also do perturbation theory in machine learning. Suppose I am training a GAN, a diffusion model, or some other model that learns to match an empirical distribution. We'll use a statistical physics setup and write the empirical distribution as:

$$
p(x) = \frac{1}{Z} \exp(-H(x))
$$

Note that we may or may not have an explicit formula for H; all we need is samples from p. The distribution corresponding to the perturbed Hamiltonian H + ΔH is:

$$
p'(x) = \frac{1}{Z'} \exp(-H(x) - \Delta H(x))
$$
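To make the two definitions concrete, here is a minimal sketch on a small discrete state space where H and ΔH can simply be tabulated. The energies are made-up illustrative values, not anything from a real model. The script checks the relation the rest of this post relies on: p'(x) is p(x) reweighted by exp(-ΔH(x)) and then renormalized.

```python
import math

def boltzmann(energies):
    """Normalized distribution exp(-E) / Z over a finite list of state energies."""
    weights = [math.exp(-e) for e in energies]
    z = sum(weights)  # partition function Z
    return [w / z for w in weights]

# Illustrative (made-up) energies for a 4-state system.
H = [0.0, 0.5, 1.0, 2.0]
dH = [0.1, -0.2, 0.0, 0.3]  # a small perturbation ΔH(x)

p = boltzmann(H)
p_perturbed = boltzmann([h + d for h, d in zip(H, dH)])

# Reweight p by exp(-ΔH) and renormalize; this should reproduce p'.
reweighted = [pi * math.exp(-d) for pi, d in zip(p, dH)]
total = sum(reweighted)  # this normalizer equals Z'/Z
reweighted = [r / total for r in reweighted]

print(p_perturbed)
print(reweighted)
```

States where ΔH is negative (here the second one) gain probability, and states where it is positive lose it.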

The network's loss function is an expectation over the data, of the form:

$$
\mathcal{L} = \langle L(x, \theta) \rangle_{x \sim p}
$$

Here θ are the network's parameters, and L is the per-sample loss, whose form depends on what kind of model we're training. Now suppose we'd like to perturb the Hamiltonian, and assume we have an explicit formula for ΔH. Then we can modify the loss by reweighting each sample:

$$
\mathcal{L} = \langle L(x, \theta)\, \exp(-\Delta H(x)) \rangle_{x \sim p}
$$
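Why this reweighting targets the perturbed distribution follows from a one-line change of measure, using only the definitions of p and p' above:

$$
p(x)\, e^{-\Delta H(x)} = \frac{e^{-H(x) - \Delta H(x)}}{Z} = \frac{Z'}{Z}\, p'(x)
\quad\Longrightarrow\quad
\langle L(x, \theta)\, e^{-\Delta H(x)} \rangle_{x \sim p} = \frac{Z'}{Z}\, \langle L(x, \theta) \rangle_{x \sim p'}
$$

The factor Z'/Z does not depend on θ, so the reweighted loss has the same minimizer as training directly on samples from p', without ever sampling from p' or computing either partition function.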

If the perturbation is too large, the exponential weights let a few outlier samples dominate the loss, so training effectively sees only a handful of examples. But if the perturbation is small, we can shift the learned distribution by a small amount in a desired direction.
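Both regimes show up in a toy problem whose answer is known in closed form. This is a sketch under assumptions chosen for illustration, not a real training setup. The data come from a standard normal, so H(x) = x²/2. The perturbation is a hypothetical linear tilt ΔH(x) = -εx. The "model" is a single parameter θ trained with the squared-error loss L(x, θ) = (x - θ)²/2, whose minimizer over any distribution is that distribution's mean. Completing the square gives p'(x) ∝ exp(-(x - ε)²/2), so reweighted training should move θ from 0 toward ε. The script also reports how much of the total weight a single sample carries.

```python
import math
import random

def train_reweighted(samples, eps, lr=0.5, steps=50):
    """Gradient descent on the reweighted loss sum_i w_i (x_i - theta)^2 / 2,
    with w_i = exp(-ΔH(x_i)) and the hypothetical tilt ΔH(x) = -eps * x."""
    weights = [math.exp(eps * x) for x in samples]
    total_w = sum(weights)
    theta = 0.0
    for _ in range(steps):
        # Gradient of the weighted loss, divided by the total weight. Dividing by a
        # theta-independent constant leaves the minimizer unchanged and keeps a fixed
        # step size stable even when the weights are large.
        grad = -sum(w * (x - theta) for w, x in zip(weights, samples)) / total_w
        theta -= lr * grad
    return theta, weights

random.seed(0)
data = [random.gauss(0.0, 1.0) for _ in range(20_000)]  # samples from p, H(x) = x^2/2

results = {}
for eps in (0.3, 3.0):
    theta, w = train_reweighted(data, eps)
    top_share = max(w) / sum(w)  # fraction of the total weight carried by one sample
    results[eps] = (theta, top_share)
    print(f"eps={eps}: theta={theta:.3f} (exact target {eps}), "
          f"largest single-sample weight share={top_share:.2e}")
```

With ε = 0.3 the fitted θ should land close to 0.3. With ε = 3, a single sample from the far tail of p carries a far larger share of the total weight, which is the outlier problem described above.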

The exponential weights will also generally increase the variance of the gradient's magnitude from batch to batch. To partially compensate, we can define an adjusted batch size as the total weight of the samples in a batch:

$$
B = \sum_j \exp(-\Delta H(x_j))
$$

Then, by varying the actual number of samples we put into each batch, we can try to keep the adjusted batch size roughly constant. One way to do this is to keep an error variable, initialized to err = 0. At each step, add a constant B_avg to err. Then add samples to the batch until adding one more sample would make the adjusted batch size exceed err. Subtract the batch's adjusted size from err, train on the batch, and repeat. Because the leftover error carries over from one step to the next, the adjusted batch sizes average out to B_avg over many steps.
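The batching procedure can be sketched as a Python generator. Several details are assumptions the text does not pin down. Samples are plain floats from an iterable stream. The sample that would have overflowed a batch is held over to start the next one. A final partial batch is flushed when the stream runs out. The tilt ΔH(x) = -0.5x in the usage is hypothetical, and the training step itself is omitted.

```python
import math
import random
from typing import Callable, Iterable, Iterator, List, Tuple

def adjusted_batches(
    samples: Iterable[float],
    delta_h: Callable[[float], float],
    b_avg: float,
) -> Iterator[Tuple[List[float], List[float]]]:
    """Yield (batch, weights) pairs, with weights exp(-ΔH(x)), whose adjusted
    sizes sum(weights) average to b_avg via a carried-over error term."""
    it = iter(samples)
    err = 0.0
    pending = None  # sample that would have overflowed the previous batch
    while True:
        err += b_avg
        batch: List[float] = []
        weights: List[float] = []
        adjusted = 0.0
        while True:
            if pending is None:
                pending = next(it, None)
                if pending is None:  # stream exhausted: flush the partial batch
                    if batch:
                        yield batch, weights
                    return
            w = math.exp(-delta_h(pending))
            if adjusted + w > err:
                break  # one more sample would push the adjusted size past err
            batch.append(pending)
            weights.append(w)
            adjusted += w
            pending = None
        err -= adjusted  # leftover error carries over to the next step
        # If a single weight exceeds err, the batch is empty; the caller can skip it.
        yield batch, weights

# Usage with a hypothetical tilt ΔH(x) = -0.5 x on standard-normal samples.
random.seed(1)
stream = (random.gauss(0.0, 1.0) for _ in range(100_000))
sizes, counts = [], []
for batch, weights in adjusted_batches(stream, lambda x: -0.5 * x, b_avg=32.0):
    sizes.append(sum(weights))
    counts.append(len(batch))
    # A real training loop would take a step on sum(w * loss(x)) here.

full = sizes[:-1]  # drop the final, partial batch
print(len(sizes), sum(full) / len(full), min(counts), max(counts))
```

Since err never drops below zero and stays below the weight of the held-over sample, the running total of adjusted sizes after k steps is within one sample weight of k · B_avg. The sample counts per batch vary while the adjusted sizes stay near B_avg.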